On April 13, 2026, the UK AI Safety Institute (AISI) published its evaluation of Claude Mythos Preview, a model announced just days earlier on April 7. The test scenario was "The Last Ones" (TLO), a simulated 32-step enterprise attack that takes human experts roughly twenty hours to execute.

The model completed 22 of the 32 steps on average, and finished the entire attack end-to-end on 3 of 10 attempts. It is the first model ever recorded to do so.

The previous generation (Opus 4.6) averaged just 16 steps and had never finished.

Four numbers from the AISI study are worth sitting with:

The 73% figure matters because no model before April 2025 could complete expert-level CTFs at all. In under a year, that changed from "zero capability" to "passing grade."

The last number is the most alarming. Performance scales log-linearly with inference compute, and AISI observed no plateau. More money, more time, and more tokens buy more capability, and no ceiling showed up within the range AISI tested.

The Mythos report is not an outlier. Multiple independent bodies reached the same conclusion in the same quarter:

| Source | Finding |
|---|---|
| International AI Safety Report 2026 | "Current AI systems can already autonomously carry out some tasks involved in cyberattacks." Documents a real-world incident. |
| UK NCSC | Frontier AI offensive capability is doubling every 4 months. |
| Malwarebytes 2026 Predictions | "Fully autonomous ransomware pipelines" allowing small crews to attack many targets simultaneously. |
| PentestGPT v2 (academic) | 76.9% completion on HackTheBox Season 8, placing in the top 100 of 8,036 active participants globally. |
| Hadrian survey | 70 open-source AI pentest tools cataloged by March 2026, 65 of them launched in 18 months. |

Five sources spanning four perspectives (governmental, academic, commercial, and threat-intel), and all of them are pointing in the same direction.

The NCSC's assessment is easy to glance past. It shouldn't be. Compounding at that rate produces numbers that defenders need to take seriously:

Two years at a four-month doubling period is six doublings, a 64-fold change. If the trend holds and capability gains translate directly into speed, a vulnerability that takes an AI agent 8 hours to exploit today will take 7.5 minutes in two years. Meanwhile, enterprise patch cycles are still measured in weeks, and vulnerability-disclosure-to-exploit windows are already shrinking past the point of human response.
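The arithmetic behind that projection is easy to check. The sketch below assumes, as the paragraph above does, that each doubling in capability halves time-to-exploit; that mapping is this article's extrapolation, and the function name `projected_minutes` is purely illustrative.

```python
# Illustrative arithmetic only. Assumes each capability doubling halves
# time-to-exploit, which is an extrapolation rather than a measured result.

DOUBLING_PERIOD_MONTHS = 4  # doubling period attributed to the NCSC above


def projected_minutes(initial_minutes: float, months_elapsed: float) -> float:
    """Time-to-exploit after `months_elapsed`, halving once per doubling period."""
    doublings = months_elapsed / DOUBLING_PERIOD_MONTHS
    return initial_minutes / (2 ** doublings)


if __name__ == "__main__":
    start = 8 * 60  # 8 hours, expressed in minutes
    for months in (0, 4, 8, 12, 16, 20, 24):
        print(f"{months:2d} months: {projected_minutes(start, months):7.1f} minutes")
```

At 24 months the loop reaches 480 / 64 = 7.5 minutes. Changing `DOUBLING_PERIOD_MONTHS` shows how sensitive the conclusion is to that single input.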

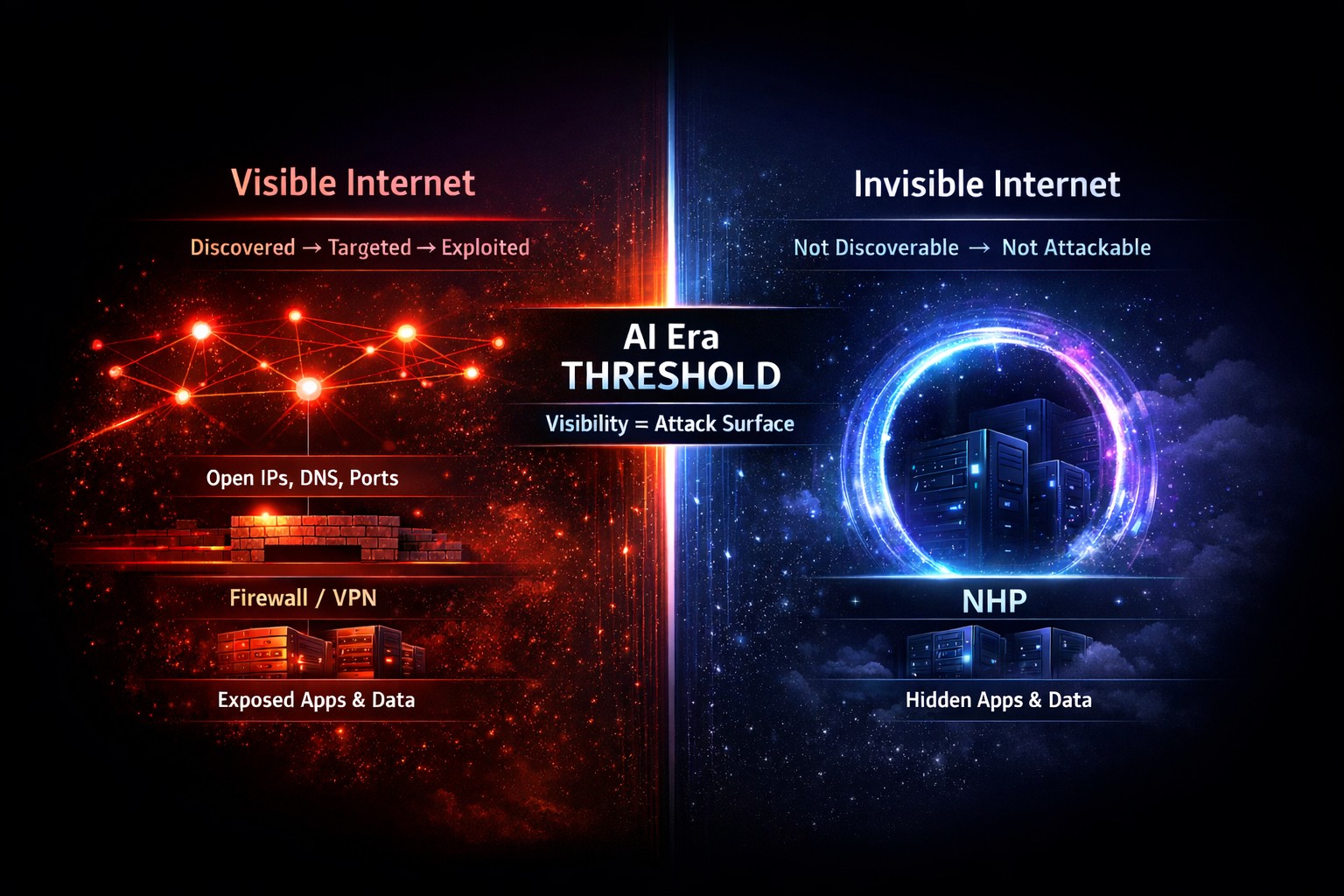

Modern security stacks — TLS, WAFs, firewalls, EDR, SIEM — were built around a fundamental assumption: services are visible, and defense begins at the handshake. You expose a port. You publish a DNS record. You terminate TLS. Then you authenticate.

Every layer of that stack assumes the first three facts are public. For a human attacker operating at human speed, that was tolerable — the reconnaissance cost something, and fingerprinting took effort.

For an AI agent running 24/7 at marginal token cost, that visibility is the attack surface. Every open port is free intel. Every DNS record is a map. Every TLS certificate leaks metadata. The AI doesn't need to defeat the lock — it studies the blueprint, the occupancy schedule, the supply chain, and the vendor's patch history, all in parallel, and picks the moment you're weakest.

Zero Trust correctly rejected the idea of implicit internal trust. Identity-centric authentication, continuous verification, least privilege — these are real improvements and OpenNHP does not displace them.

But most Zero Trust deployments still answer the door before checking who's knocking. The service is reachable. The port responds. The TLS handshake completes. Authentication happens after contact.

That ordering was fine in the human-speed threat era. In the autonomous-AI-speed era, it means attackers get to probe, fingerprint, enumerate, and fuzz before a single credential check. Which means they get to find the one bug that skips the credential check entirely — the exact class of flaw the Claude Code Security work proved AI can now find in bulk.

We wrote about this thesis earlier in "The Internet Is Becoming a Dark Forest". The AISI data turns that thesis from a literary metaphor into an operational requirement.

In a dark forest, every sound reveals location and every light attracts hunters. On the AI-era Internet, every exposed port reveals a target and every published DNS record attracts automated reconnaissance.

| Dark Forest | AI-era Internet |
|---|---|
| Light | Open port |
| Sound | IP address |
| Signal | DNS record |
| Hunter | Autonomous AI agent |

The full thesis is on the Vision page. In one sentence:

OpenNHP — the Network-infrastructure Hiding Protocol — implements Dark Forest defense at the session layer:

- Cryptographic knock before TCP handshake. An unauthenticated request does not get a SYN-ACK. It doesn't even get an ICMP unreachable. The service is not "filtered" — it is indistinguishable from nothing.

- NXDOMAIN for unauthorized DNS. Clients without a valid identity proof receive the same answer they'd receive for a non-existent domain. No fingerprint, no metadata, no attack surface.

- Default-deny at every layer. Domains, IPs, and ports are all hidden until identity is proven with modern cryptography (Noise Protocol, Curve25519, ChaCha20-Poly1305).

- Stateless and scalable. Built in memory-safe Go, benchmarked at 10K auth requests/sec with sub-100ms latency — so hiding doesn't come at the cost of performance.
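As a rough illustration of the "knock first, stay silent otherwise" idea in the list above, here is a minimal sketch of a knock check that produces no reply on failure. It is not OpenNHP's wire format: it swaps in a pre-shared-key HMAC for the Noise Protocol handshake the project actually uses, omits replay protection, and every name in it (`build_knock`, `verify_knock`, `handle_datagram`, `CLIENT_KEYS`) is hypothetical.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional, Set

# HYPOTHETICAL per-client shared secrets. OpenNHP itself uses Noise Protocol
# handshakes with Curve25519 keys, not a shared-secret HMAC like this.
CLIENT_KEYS = {b"client-01": b"demo-secret-do-not-use"}
MAX_SKEW_SECONDS = 30  # how stale a knock timestamp may be
TAG_LEN = 32           # SHA-256 digest size in bytes


def build_knock(client_id: bytes, key: bytes, now: Optional[float] = None) -> bytes:
    """Client side: [id length][id][8-byte timestamp][HMAC tag]. Ids must be < 256 bytes."""
    ts = struct.pack("!Q", int(time.time() if now is None else now))
    body = bytes([len(client_id)]) + client_id + ts
    return body + hmac.new(key, body, hashlib.sha256).digest()


def verify_knock(packet: bytes, now: Optional[float] = None) -> Optional[bytes]:
    """Server side: return the client id for a valid knock, otherwise None."""
    if len(packet) < 1 + 8 + TAG_LEN:
        return None
    id_len = packet[0]
    if len(packet) != 1 + id_len + 8 + TAG_LEN:
        return None
    client_id = packet[1:1 + id_len]
    body, tag = packet[:-TAG_LEN], packet[-TAG_LEN:]
    key = CLIENT_KEYS.get(client_id)
    if key is None:
        return None
    expected = hmac.new(key, body, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        return None
    (timestamp,) = struct.unpack("!Q", body[-8:])
    current = time.time() if now is None else now
    if abs(current - timestamp) > MAX_SKEW_SECONDS:
        return None
    return client_id


def handle_datagram(packet: bytes, source_ip: str, allow_list: Set[str]) -> None:
    """Default-deny: a valid knock adds the source to the allow list."""
    if verify_knock(packet) is not None:
        allow_list.add(source_ip)
    # Nothing is sent back in either case, so a scanner cannot tell a
    # rejected knock from an address with no listener at all.
```

The design point is the missing `else` branch: failure has no observable response, so there is nothing for a scanner to fingerprint. In a real deployment the allow list would drive firewall rules, and a nonce cache or similar would be needed to stop replayed knocks.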

When an autonomous AI agent scans an OpenNHP-protected environment, its reconnaissance returns nothing to pivot from. The 32-step TLO attack chain breaks at step one, because step one requires finding something to attack. There is nothing to find.

Read the protocol details on the Specification page. The draft is being standardized at the IETF and is referenced in the Cloud Security Alliance's session-layer Zero Trust guidance.

OpenNHP is the open-source protocol. Deploying a protocol still means running servers, managing keys, and integrating clients. For teams that need Dark Forest defense without that operational lift, Qurl from LayerV is the product built directly on top of OpenNHP — usable out of the box.

Qurl's tagline captures the thesis exactly:

With a single API call, Qurl issues time-limited, self-destructing access links to your servers, APIs, and admin interfaces. Until one of those links is presented, the underlying infrastructure is invisible to scanners, crawlers, and AI agents. The reconnaissance step of the TLO attack chain returns nothing because there is nothing to return.

- Out-of-the-box NHP defense — no protocol integration work required

- Ephemeral access — every link has a lifetime and revokes itself automatically

- API-first — drop it into an existing CI/CD, support, or admin workflow with one call

- OpenNHP under the hood — same standards-track cryptographic foundation, same hiding guarantees
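To make "time-limited, self-destructing" concrete, the following is a generic sketch of an expiring, single-use signed token. It is not Qurl's API or implementation, which this article does not describe; `issue_link_token`, `redeem_link_token`, and the in-memory nonce set are all hypothetical stand-ins for illustration.

```python
import base64
import hashlib
import hmac
import secrets
import time
from typing import Optional, Set

SIGNING_KEY = secrets.token_bytes(32)  # HYPOTHETICAL server-side secret
_used_nonces: Set[str] = set()         # in-memory only; a real service needs shared storage


def issue_link_token(resource: str, ttl_seconds: int, now: Optional[float] = None) -> str:
    """Create a signed token naming a resource, an expiry time, and a one-time nonce."""
    expires = int((time.time() if now is None else now) + ttl_seconds)
    nonce = secrets.token_hex(8)
    payload = f"{resource}|{expires}|{nonce}".encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).digest()
    return (base64.urlsafe_b64encode(payload).decode()
            + "." + base64.urlsafe_b64encode(signature).decode())


def redeem_link_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the resource name once, if the token is authentic and unexpired."""
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
        resource, expires, nonce = payload.decode().rsplit("|", 2)
        expires_at = int(expires)
    except ValueError:  # malformed structure, base64, UTF-8, or integer
        return None
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return None
    current = time.time() if now is None else now
    if current > expires_at:
        return None  # lifetime is over
    if nonce in _used_nonces:
        return None  # already redeemed once
    _used_nonces.add(nonce)
    return resource
```

A caller would issue a token with something like `issue_link_token("admin-panel", 300)` and hand the resulting string to the user; the second redemption, or any redemption after five minutes, returns `None`.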

If the doubling trend the NCSC observed continues, the gap between "AI is getting worryingly good at this" and "AI does this autonomously at scale, cheaply, 24/7" is measured in single-digit quarters.

Three things follow from that:

- Visibility is no longer a neutral default. Every exposed surface is a subsidy to automated attackers.

- Detection-and-response alone won't scale. You cannot staff a SOC to match an attack agent that costs $0.50/hour to run.

- Network hiding becomes an architectural baseline. Not a niche control, not a premium feature — a default.

OpenNHP is one answer. It is open source, standards-track, and deployable today. The defender arithmetic only works if we change the inputs — and the one input we control is how much of ourselves we reveal.

Autonomous AI attackers cannot exploit what they cannot find.

OpenNHP hides your infrastructure by default.